Google gets all the bashing but why?

I have been watching Google ever since I started my online business. Over more than 7 years I have seen major Google updates, and almost all of them were aimed at protecting the algorithm and getting rid of spam and of sites that use aggressive search engine ranking tactics. Today Google has become a high-quality search engine with good results. If they had not targeted the aggressive search engine optimization people, they would not be what they are today.

The hottest topic in today's SEO world is Google's ability to detect and penalize paid links. Whether you buy them or sell them, if you get caught by the Google police you are gone. In a SEOmoz post, Matt Cutts once replied to Rebecca's post and compared natural links to very strong tires, and paid or other artificial links to weak tubes or tires that can burst at any time. It's true, and from what I have seen, every site that was affected for links had some sort of problem with artificial links.

Personal experience

Our own site had a problem with Google rankings when we created the search engine promotion widget and got lots of backlinks without knowing we were abusing it. We were then hit with a ranking filter that kept our site out of the top 10. Did we whine? Well, no. Personally we were not aware that widget links can hurt a site. We did not think we were abusing the system in any way: we spent money on our widgets, and the only way we get our investment back is through links. We do that for all our tools and Google never complained about it, but when we redirected the links from the widgets to our homepage, Google's algorithm got angry with our site and reduced our rankings.

What did we do?

We never whined. We made all the widget links optional no-follow, cleaned up some links to the homepage, removed the link to the homepage and pointed it to the widget page directory instead, checked for any other potential problems with our website, and submitted a reconsideration request. Within 1 month we were back in the rankings.
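As a rough illustration of that fix, here is a minimal Python sketch of how a widget embed snippet with an optional, no-follow credit link could be generated. The function name, its parameters and the example.com URLs are hypothetical; this is not our actual widget code.

```python
from html import escape


def build_widget_embed(widget_src, credit_url, credit_text,
                       include_credit=True, nofollow=True):
    """Return embed HTML for a hypothetical widget.

    include_credit: whether the credit link is emitted at all (optional link).
    nofollow: whether the credit link carries rel="nofollow".
    """
    # The widget itself is loaded by a script tag.
    parts = ['<script src="{}"></script>'.format(escape(widget_src, quote=True))]
    if include_credit:
        rel = ' rel="nofollow"' if nofollow else ""
        # The credit link points at a widget page, not the homepage.
        parts.append('<a href="{}"{}>{}</a>'.format(
            escape(credit_url, quote=True), rel, escape(credit_text)))
    return "\n".join(parts)


print(build_widget_embed(
    "https://www.example.com/widget.js",
    "https://www.example.com/widgets/",
    "Search engine promotion widget",
))
```

Passing `include_credit=False` drops the link entirely, which is the "optional" part; the default keeps the link but marks it no-follow.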

So was Google wrong with our website?

Of course not. Even though we thought widget links, when not abused, would not affect rankings, we still shouldn't have linked to the homepage with keywords. It was our mistake, and Google has every right to make us regret it in their own way. But Google was nice, in fact very nice: after we rectified our mistake and explained it to them, we got back into the rankings. So Google definitely wants us back in their rankings. Over 4000 people use our SEO tools (https://www.searchenginegenie.com/seo-tools.htm) and almost 2000 of them come from search engines. Google knows that our tools get a lot of traffic from them, and they are happy to send people because people like the tools.

We don't come under link buying / selling category

We never bought a single link to our site. Almost all of them are links to our tools and widgets, plus some custom-built links through articles, directories, blogging etc. We don't buy links, but we were still hit by a link buying / selling detection algorithm. Was Google wrong in doing this? Of course not, because abusing a widget is the same as buying links. Those links are not editorially given; people linked to us in exchange for our widget. They didn't link to our homepage because they liked our site. I understand that, and when everyone in our company understands Google's position, we are all good with anything Google decides. But not everyone takes it that way. I see so much Google bashing out there whenever Google does something to protect itself and its algorithm.

Being an SEO is nothing to be proud of.

Some people think SEO is something great and that they are the best in the world. I'll tell you: from Google's point of view most SEOs are very close to spammers. Not everyone, but most, I said, including places like SEOmoz, which is popular among SEOs and discusses so much link buying / selling. Even Rand Fishkin is an active supporter of Text-Link-Ads, and he also supports buying / selling links for ranking. If this industry supports so much text link buying / selling for ranking purposes and Google tries to defend itself, is that wrong? For most SEOs, yes, Google is wrong. I would call that **** ****. Without Google you would never have existed; who are you to give commands to Google? Google's massive improvement in transparency has helped webmasters a lot. But still webmasters and SEOs want more and more. They don't want Google to penalize link buying, selling and other sorts of aggressive and abusive link building tactics. I would say let the SEOs run the search engine; then they would know how difficult it is. Even the so-called Google supporters abuse the search engines when they lose rankings. If you lost your rankings, look at the mistake you made. Rectify your mistakes and ask Google to reconsider rather than whining that Google is useless.

Confession from an SEO.

I have been in this industry for more than 7 years. Am I proud to be an SEO? No, never. This industry is hated by so many people, including the search quality engineers themselves. I am passionate about search engines. I like them, I like the miracle algorithms that work behind them, I like all the PhDs. I personally wanted to become a scientist, which never happened. I want to be friends with search engineers, not for SEO benefit but to admire and learn from the wonderful work they do. I sometimes wonder why I came into this SEO industry. The truth is I came into SEO from my programming background only for the money involved. This industry has so much more money in it than programming and web design. People will pour in money if they get good business from search engines. I have seen that in practice in some PPC campaigns our company handles. Some big clients spend around $100,000 a month on PPC. Though it is not the same in SEO, the rewards are still high. But I am always looking for alternative ways, because I am not the bad-guy type who goes after money. I like to earn money in a way everyone appreciates, not in a way that makes everyone glare at you. To all the SEOs out there: realize the type of work you are doing, and please give respect to my lovable search engine. If Google had never existed I wouldn't be here running a business in SEO. Love Google and appreciate everything they do, whether it's right or wrong. Everyone appreciates it when Google does something right and everyone bashes them when they do something harsh to protect their algorithm. Love Google and all its efforts.

My suggestion to all the SEOs and newbies (so-called SEOs) out there: Google is a search engine for people; it's not for you to play with.

Labels: Google, search engine articles, Webmaster News

Google webmaster tools new features:

- One-stop Dashboard: We redesigned our dashboard to bring together data you view regularly: Links to your site, Top search queries, Sitemaps, and Crawl errors.

- More top search queries: You now have up to 100 queries to track for impressions and click through! In addition, we've substantially improved data quality in this area.

- Sitemap tracking for multiple users: In the past, you were unable to monitor Sitemaps submitted by other users or via mechanisms like robots.txt. Now you can track the status of Sitemaps submitted by other users in addition to yourself.

- Message subscription: To make sure you never miss an important notification, you can subscribe to Message Center notifications via e-mail. Stay up-to-date without having to log in as frequently.

- Improved menu and navigation: We reorganized our features into a more logical grouping, making them easier to find and access. More details on changes.

- Smarter help: Every page displays links to relevant Help Center articles and by the way, we've streamlined our Help Center and made it easier to use.

- Sites must be verified to access detailed functionality: Since we're providing so much more data, going forward your site must be verified before you can access any features in Webmaster Tools, including features such as Sitemaps, Test Robots.txt and Generate Robots.txt which were previously available for unverified sites. If you submit Sitemaps for unverified sites, you can continue to do so using Sitemap pings or by including the Sitemap location in your robots.txt file.

- Removal of the enhanced Image Search option: We're always iterating and improving on our services, both by adding new product attributes and removing old ones. With this release, the enhanced Image Search option is no longer a component of Webmaster Tools. The Google Image Labeler will continue to select images from sites regardless of this setting.
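The note about unverified sites mentions listing the Sitemap location in your robots.txt file. As a minimal sketch (the robots.txt content and the example.com URL below are made up for illustration), Python's standard `urllib.robotparser` module, in Python 3.8 or later, can read such Sitemap lines back out of a robots.txt file:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the Sitemap line is the part that
# tells crawlers where the Sitemap lives.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Sitemap: https://www.example.com/sitemap.xml
"""


def sitemaps_from_robots(robots_text):
    """Return the Sitemap URLs declared in a robots.txt string."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    # site_maps() returns None when no Sitemap line is present.
    return parser.site_maps() or []


print(sitemaps_from_robots(ROBOTS_TXT))
```

This only checks that the Sitemap line is well formed and discoverable; it does not say anything about how Google itself processes the file.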

Webmaster Tools now has many new features. When you sign in to Webmaster Tools you will see a new home page with a message center and a list of all your sites. Each verified site has a one-stop dashboard that gives you the highlights from its data. You can now reach your favorite features more easily, with better navigation and easier troubleshooting, and you can see more search queries for your site than ever before. You have had access to the robots.txt and URL tools for some time, but now all the tools are together at last under one tab. Google already sent messages about your site to the Webmaster Tools inbox; now you can forward those messages to people you know. The team says they really enjoyed redesigning Webmaster Tools and that this is just the beginning, so stay tuned for more updates.

Labels: Google, Webmaster News

Webmaster claims in webmasterworld yahoo directory listing stays free

Here are a few tips for evaluating a Yahoo directory listing:

1. Do a Y! directory search first to find the most appropriate category for your listing. Don't choose the one you want because of PR or how high up it is, because the editor will probably place your site in the most appropriate category.

2. Ask yourself - how many other sites are listed in this category? Is my link likely to be buried on Page 2 or 3, or is it more likely to be near the top of page 1? Does the category itself have any Google search rankings for keywords relevant to your site? If so you may be able to get traffic from it.

3. From an SEO perspective, does the category have PageRank? Usually top tier categories have some decent PageRank, but as you go down deeper into the directory they don't. Number of outgoing links in a category is an issue here too. If you're likely to be buried on the 2nd or 3rd category page there will be less value.

4. From an SEO perspective relevance is important - how relevant is this directory category to my keywords?

5. Trust factors - is that category linking out to other good sites, or linking out to some expired domains or bad neighborhoods? Y! directory is one of the oldest most trusted web directories so there is usually some trust value associated with a link from here.

So as you can see, there are some instances where a Y! directory link will be valuable and others where it won't, it just depends on your niche.

Labels: Webmaster News

Offensive Google AdSense Ads Showing Up On Publisher Sites

This caused him to remove Google AdSense from his site. It was an illegal ad. It is not the first time: another publisher running Google AdSense on a children's website discovered adult and teen gay chat room ads on the site. When the parents of those children complained, the publisher said it was Google's responsibility.

In the end, though, the parents stopped visiting the site; ultimately the publisher was held responsible. What did the publisher do next? He stopped using Google AdSense.

The specific "Asian Women" ad is most likely just some overseas bridal program. Ads like this are very offensive, so Google should consider filtering further. Again, by association, the publisher gets held responsible, and Google is bound to lose revenue when the publisher pulls out of the AdSense program.

Labels: Webmaster News

Update from Google from our newsletter

On-demand Sitemaps for Custom Search: it's all about Custom Search. Webmasters who submit Sitemaps to Webmaster Tools get special treatment. Custom Search identifies the submitted Sitemaps and indexes URLs from them into a separate index for higher-quality Custom Search results. Google looks at your CSEs, picks up the appropriate Sitemaps, and figures out which URLs are relevant to your search engines for enhanced indexing. This brings a dual benefit: better discovery for Google.com and more comprehensive coverage in your own CSEs. Google has now taken a step forward in improving your experience with its webmaster services by launching On-Demand Indexing in Custom Search. With it you can tell Google about pages that are new, or that are significant and have changed, and Custom Search will immediately schedule them for crawling and indexing and serve them in your CSEs, normally within 24 hours and often much sooner. The post also lists some important points to bear in mind.

The third update is a very interesting one: it's all about the SEO Starter Guide. It covers around a dozen common areas that webmasters might consider optimizing. They felt that these areas would apply to webmasters of all experience levels and to sites of all sizes and kinds. Throughout the guide they also worked in many examples, pitfalls to avoid, and links to other resources that help expand their explanation of the topics. They also plan to update the guide at regular intervals with new optimization suggestions and to keep the technical advice current.

Spookier than malware: this update is all just fun. It's about the Halloween celebration by the Webmaster Central team!

Reflections on the "Tricks and Treats" webmaster event: this post covers how it went, what's next, a big thank you, and a few presentations by people from Google Groups. It was an exciting, exhausting, and educational event. They're aware that the sound quality wasn't great for some folks, and they appreciated the helpful constructive criticism in the feedback thread. Last but not least, they are bummed to admit that someone forgot to hit the record button. As for what's next, for starters the Webmaster Central Googlers will be spending quite some time taking in the feedback. Some have requested sessions covering particular (pre-announced) topics or tailored to specific experience levels, and they've also heard from many webmasters outside of the U.S. who would love online events in other languages and at more suitable times. No promises, but they say they're eager to please! Next comes a big thank you to all the fellow Googlers, and finally the few presentations mentioned earlier.

Malware? Don't need any stinking malware! This post explains a warning we often see while browsing: "This site may harm your computer". It explains in detail what the malware label means and how to get rid of it when Google's scanners find malware on your site. It lays out all the points clearly and also explains how to request a review via Google's Webmaster Tools.

Labels: Webmaster News

Webmasterworld guy needs help for his website

"Noticed a 50% Google traffic drop in one of my sites. Site has some 7 years and has some 170 pages in 3 different languages including English and Spanish. Several pages was #1 and others in first 10 positions mostly.

Other search engines seems to be ok with rankings including some Yahoo's #1.

Even now with half the usual traffic I get lots of bookmarks what is a good indicator to me.

Regarding Google: some pages are still #1 but other pages are dozens or hundred of pages down now for older keyphrase.

However I noticed that if I pick just some words of my keyphrase my page ranks in first 10 or 30 results, while using the old 2 words keywords phrase that used to rank #1, results in #300

I haven't changed site so I wonder why Google went from love to hate with those pages...

So what I'm doing is "deoptimizing" pages, lowering keyword density and wait, but I'm not sure about it. I'm assuming a kind of -950 penalty http://www.webmasterworld.com/google/3215939.htm

Perhaps I just need to wait (in case my case matches this: http://www.webmasterworld.com/google/3669390.htm )

Site was banned in wikipedia months ago, but never had problems with google about it and I don't think Google use wikipedia to consider a site good or not. "

If you can help, join this thread: webmasterworld.com/google/3705564.htm

Labels: Webmaster News

Google now reads text in pages - boost to their algorithm

At https://www.google.com/search?hl=en&q=site%3Awebmasterworld.com+google+keyword+tool you can see specific data under some search results. I feel this is a major step forward.

Labels: Webmaster News

Webmaster Central tools now in other languages

"We're always working for new ways to make life a bit easier for webmasters. We've had great feedback to many of the initiatives that have taken place in Webmaster Tools and beyond, but given the complex nature of managing a website, there are some questions regarding the tools that come up quite often across the Webmaster Help Groups. This got us thinking: how can we best address these questions? Well, if you're like me, then you find it a lot easier to learn how to use something if you actually get to see someone else doing it first; with that in mind, we'll launch a series of six video tutorials in French, German, Italian and Spanish over the next couple of months. The videos will take you through the basics of Webmaster Tools as well as how to use the information in the tools to make improvements to your site and hence your site's visibility in Google's index. Our first video provides an overview of the different information you can access depending on whether you've verified ownership of your site in Webmaster Tools. We'll also explain the different verification methods available. And just to whet your appetite, here are the topics covered in the series:

- Video 1: Getting started, signing in, benefits of verifying a site
- Video 2: Setting preferences for crawling and indexing
- Video 3: Creating and submitting Sitemaps
- Video 4: Removing and preventing your content from being indexed
- Video 5: Utilizing the Diagnostics, Statistics and Links sections
- Video 6: Communicating between Webmasters and Google"

Google now provides Webmaster Tools in Italian, French, Spanish and other languages.

Labels: Webmaster News

Google update september 2008 webmasterworld forum discussion.

A member says

"

I think Google is weeding old or stagnant pages out of the index to make way for new pages, it is the only way they can keep up with the internet IMO. I recently did a search for a topic from 2002 and it was like going back into the stone ages in search. Everything now is what is happening today, not years ago. I don't know what all your sites are about but even on the top sites it seems they weed the pages.

For example I ran a search for an electronics product from 2000, only 8 years ago. You can barely find traces of it in the sites I searched via Google. Now do the same search from a product from today, say the iphone. There is probably a billion pages on that. Now I am not saying they are doing things wrong, but with the millions of pages added every day to the internet they have to delete or else run out of space perhaps. I just wish they had the ability to search the archives easily for the topics or products that are "old". Right now you can do that with Google news but not Google search.

Anyway my point is I think Google looks at a site and compares all the content, then keeps some of the most recent content in the results including the higher PR stuff and puts the older stuff in supplemental. That is only a guess but seems to be what is happening.

Since the older stuff I looked for was probably dropped into the deepest parts of these sites I couldn't find it with Google anymore.

Maybe though this is the way the internet search will be, you use if for todays content only. If they had to archive all our sites I don't think it is possible, not with all the pages being added."

http://www.webmasterworld.com/google/3736037.htm

Labels: Webmaster News

No Longer Have Badware - Badware reinclusion request

1) If you have badware, it usually means that your web server, your website, or a database used by your website has been compromised. Google has a nifty post on how to handle being hacked. You should be very careful when inspecting for malware on your site so as to avoid exposing your computer to infection.

2) Once everything is clear and dandy, you can follow the steps in our post about malware reviews via Webmaster Tools. Please note the screen shot on the previous post is outdated, and the new malware review form is on the Overview page and looks as shown below:

(Pic.)

3) Lastly, if you believe that your rankings were somehow affected by the malware, such as compromised content that violated the Webmaster Guidelines [i.e. hacked pages with hidden pharmacy text links], you should fill out a reconsideration request. To clarify, reconsideration requests are usually used for when you notice issues stemming from violations of our Webmaster Guidelines and are separate from malware requests.

If you have any additional queries, you can review their documentation or can post to the discussion group with the URL of your site. This updated feature in webmaster tools is very efficacious in discovering & fixing any malware related problems.

Source: Google Webmaster Central Blog

Labels: Webmaster News

Interesting post in webmasterworld on how punctuation in keywords affect results.

Read the post

"Various punctuation characters have a noticeable impact on search results - mostly from a searcher perspective. As a webmaster, you may find that your users include punctuation in some keywords, and so it can be of use to know what the effect on the results they see is. And besides, knowing how to search Google is one step towards understanding how Google works. This is a spot check of the current handling of punctuation by Google.

Indexed punctuation

Key_word

Underscores are treated as a letter of the alphabet, which is why you can search for an underscore directly. Use underscores in content if your visitors include an underscore when searching (e.g. if you had a programming site).

Key&word

Ampersands or 'and symbols' have fairly unique handling. They're both indexed and also treated as the equivalent of word "and". If there are no spaces separating the symbol and the adjacent letters, the search results are an approximate equivalent of combining results for ["key and word"] and ["key & word"] (note the phrase matching). Use ampersands in copy as is natural for your target audience.

Explicit search operators

Many punctuation characters are explicit search operators, with a documented effect on results. Search operators are not indexed (or at least, they can't be searched for) and so are usually treated as word separators when found within website copy:

Key|word

An (unbroken) pipe character is the equivalent of boolean OR: a search for [key OR word]. It can be a handy shortcut when conducting complex queries.

Key"word

A double quote triggers an exact or phrase search for the proceeding words (whether you include a closing double quote or not). So in this instance, it's the equivalent of a search for [key word] since a single word can't be a phrase. ["key word] is the same as searching for ["key word"].

Key*word

An asterisk is a wildcard search for zero or more words: [key ... word]. Putting numbers on both sides will trigger the calculator. Occasionally, Google delivers (strange!) results if you search for an asterisk directly.

Key~word

A tilde triggers Google's related word operator - in this instance, a search for both 'key' and 'word', as well as other words related to 'word' - like 'Microsoft', 'dictionary' and others.

Search operator oddities

Key-word

A hyphen (as is probably consistent with language use) returns a mix of results for the words both used separately, and joined together - somewhere between [key word] and [keyword]. It's the preferred word separator within website URLs, since other punctuation characters that are treated as a word-separator have specific functions within a URL.

Others

A few punctuation characters have a strange impact on results - returning far fewer results than for either separated or concatenated words. They are neither known search operators, or indexed characters. These are . / \ @ = :

As far as I'm aware, all other punctuation characters are treated as simply a space or word separator.

So, do I have too much time on my hands? Probably. But why not confuse whoever looks at Google's search logs by trying a few punctuation searches yourself? ;)

Do you know any punctuation with an effect on results not discussed here, or more about the effect on results of the punctuation above? "
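To make the poster's categories easier to scan, here is a small Python sketch that maps a punctuation character to the behaviour described in the post above. The category names and the function are our own illustration of one forum member's spot check; they are not documented Google behaviour.

```python
# Each table paraphrases the quoted post; nothing here queries Google.
INDEXED = {
    "_": "indexed, treated like a letter",
    "&": "indexed, also treated as the word 'and'",
}
OPERATORS = {
    "|": "boolean OR",
    '"': "exact / phrase search",
    "*": "wildcard for zero or more words",
    "~": "related-word operator",
}
ODDITIES = set("./\\@=:")  # far fewer results, per the post


def classify_punctuation(ch):
    """Return (category, note) for a single punctuation character."""
    if len(ch) != 1 or ch.isalnum() or ch.isspace():
        raise ValueError("expected a single punctuation character")
    if ch in INDEXED:
        return "indexed", INDEXED[ch]
    if ch in OPERATORS:
        return "operator", OPERATORS[ch]
    if ch == "-":
        return "hyphen", "mix of separate-word and joined-word results"
    if ch in ODDITIES:
        return "oddity", "returns far fewer results than either form"
    return "separator", "treated as a space or word separator"


for symbol in ["_", "&", "|", "-", "@", "!"]:
    print(symbol, classify_punctuation(symbol))
```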

Labels: Google, Webmaster News

Adobe provides technology to Google and yahoo to crawl flash

"Adobe Systems Incorporated (Nasdaq:ADBE) today announced the company is teaming up with search industry leaders to dramatically improve search results of dynamic Web content and rich Internet applications (RIAs). Adobe is providing optimized Adobe® Flash® Player technology to Google and Yahoo! to enhance search engine indexing of the Flash file format (SWF) and uncover information that is currently un discoverable by search engines. This will provide more relevant automatic search rankings of the millions of RIAs and other dynamic content that run in Adobe Flash Player. Moving forward, RIA developers and rich Web content producers won't need to amend existing and future content to make it searchable — they can now be confident it can be found by users around the globe."

Read press release here

Labels: Other Search Information, Webmaster News

Matt Cutts reiterates Yahoo Directory has plenty of PageRank internally.

Most probably the reason for the grey PageRank is one of the reasons we addressed in our article. Now WebmasterWorld members think Google has purposely imposed a grey bar penalty on the Yahoo directory to reduce the competition. For major sites like the Yahoo directory, the grey PageRank display could just be the same problem that inner pages face on many established sites. Google has imposed some sort of automated filter on a site's inner pages if the pages don't have good external links or Google doesn't feel they are valuable. We see this across many pages of our own site. I feel this is just temporary, and Matt Cutts has cleared this up.

Matt Cutts replied in this thread: "It looks like it's just a matter of canonicalizing upper vs. lowercase as to why some of the subdirectories look the way they do in the toolbar. I just wanted to reiterate that the Yahoo Directory has plenty of PageRank in our internal systems."

Labels: Mattcutts, Webmaster News

Sergey plans his space invasion

Labels: Google, seo cartoons, Webmaster News, website

Losing Viacom Lawsuit might cost $250 Billion for Google.

Google responded strongly in its counter-filing in court. Google's filing says, "By seeking to make carriers and hosting providers liable for Internet communications, Viacom's complaint threatens the way hundreds of millions of people legitimately exchange information, news, entertainment, and political and artistic expression." We strongly supported Google's claim.

Doing further research on Viacom's claim, we were able to find some hard facts. What currently looks like a small lawsuit might blow up into a major nuclear explosion if Google ever loses it. Viacom claims YouTube hosts more than 150,000 copyrighted videos and is claiming a billion dollars for them. So what happens if Google loses this lawsuit? I am sure other companies won't sit quiet.

Companies like CBS and NBC will jump in and claim their own damages, and Google will have to face lots of companies around the world. I am sure a lost YouTube lawsuit alone will cost Google 50 billion dollars if other companies claim their share.

Google is a search engine built by using other websites' information in its search results. It keeps a cache of copyrighted pages crawled from the web, so if YouTube is in the wrong then Google search is in the wrong too, and with all the billions of sites out there Google would go bankrupt. I estimate Google would need to pay 200 billion dollars. Google News also recently faced a major lawsuit from a Belgian newspaper group. They want more than 75 million dollars because their news appeared in Google News.

It seems everyone wants a piece of the Google pie.

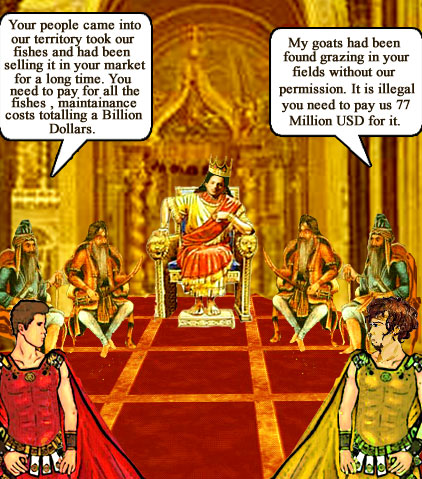

Look at the following set of images, in which I tried to portray the 2 lawsuits.

This image shows the Google Kingdom. Does that look like Sergey Brin on the King's chair? ;-)

This is Google country guys fishing in Viacom territory. Is Google responsible for this?

Google country guys selling fishes that are taken from Viacom territory river.

This image criticises the Belgium Newspaper group. Goats from the Belgium Newspaper group territory are grazing in Google's territory.

Google's Market share / wealth

Viacom and Belgium Newspaper claim

Vijay

Labels: Search Engine Cartoons, search engines, Webmaster News

ICANN Sends out warning to registrars with Lot of spam

ICANN recently sent out warnings to domain registrars that handle a lot of spam domains. The worst spam offenders were identified by a survey and have been sent notices to stop spam in their registrations or face a ban.

"ICANN has sent enforcement notices and notices of concern to certain registrars, including those reported this week as being the registrars for the majority of websites advertised in spam emails.

Earlier this week, an investigation by KnujOn, widely reported online, publicly identified 10 registrars as being the companies used to register the majority of domain names that have since appeared in spam email messages.

More than half of those registrars named had already been contacted by ICANN prior to publication of KnujOn's report, and the remainder have since been notified following an analysis of other sources of data, including ICANN's internal database.

With tens of millions of domain names in existence, and tens of thousands changing hands each day, ICANN relies upon the wider Internet community to report and review what it believes to be inaccurate registration data for individual domains. To this end, a dedicated online system called the Whois Data Problem Report System ("WDPRS") was developed in 2002 to receive and track such complaints.

"ICANN sends, on average, over 75 enforcement notices per month following complaints from the community. We also conduct compliance audits to determine whether accredited registrars and registries are adhering to their contractual obligations," explained Stacy Burnette, Director of Compliance at ICANN. "Infringing domain names are locked and websites removed every week through this system."

Although the majority of registrars offer excellent services and contribute to the highly competitive market for domains, ICANN's compliance department has developed an escalation process to protect registrants and give registrars an opportunity to cure cited violations before ICANN commences the breach process.

However, while registrars are responsible for investigating claims of Whois inaccuracy, it is not fair to assume a registrar that sponsors spam-generating domain names is affiliated with the spam activity. A distinction must be made between registrars and an end user who chooses to use a particular domain name for illegitimate purposes.

"But if those registrars, including those publicly cited, do not investigate and correct alleged inaccuracies reported to ICANN, our escalation procedure can ultimately result in ICANN terminating their accreditation and preventing them from registering domain names," Ms Burnette said. "

Labels: Webmaster News, website

Theplanet datacenter restored in batches

Power in H1 Phase 1 has been restored. We are starting to turn customer servers on in batches.

Labels: Webmaster News, website

Theplanet's datacentre - Largest dedicated server provider down

Update Note from them

"This evening at 4:55pm CDT in our H1 data center, electrical gear shorted, creating an explosion and fire that knocked down three walls surrounding our electrical equipment room. Thankfully, no one was injured. In addition, no customer servers were damaged or lost. We have just been allowed into the building to physically inspect the damage. Early indications are that the short was in a high-volume wire conduit. We were not allowed to activate our backup generator plan based on instructions from the fire department. This is a significant outage, impacting approximately 9,000 servers and 7,500 customers. All members of our support team are in, and all vendors who supply us with data center equipment are on site. Our initial assessment, although early, points to being able to have some service restored by mid-afternoon on Sunday. Rest assured we are working around the clock. We are in the process of communicating with all affected customers. we are planning to post updates every hour via our forum and in our customer portal. Our interactive voice response system is updating customers as well. There is no impact in any of our other five data centers."

As you know, we have vendors onsite at the H1 data center. With their help, we've created a list of equipment that will be required, and we're already dealing with those manufacturers to find the gear. Since it's Saturday night, we do have a few challenges. We are prioritizing issues as follows:

Getting the network up at H1 is first and foremost. We're pulling components from our five other data centers – including Dallas – which will be an all-night effort.

Getting power back to the data center is key, though it is too early to establish success there.

Because Server Command is in H1, our legacy EV1 customers are blinded about this incident. We are in the process of moving the Server Command servers to other Houston data centers so that we're able to loop them into communications.

We absolutely intend to live up to our SLA agreements, and we will proactively credit accounts once we understand full outage times. Right now, getting customers back online is the most critical.

Labels: Webmaster News

Google has more than 200,000 Servers

"Google doesn't reveal exactly how many servers it has, but I'd estimate it's easily in the hundreds of thousands. It puts 40 servers in each rack, Dean said, and by one reckoning, Google has 36 data centers across the globe. With 150 racks per data center, that would mean Google has more than 200,000 servers, and I'd guess it's far beyond that and growing every day. "

Source: http://news.cnet.com/8301-10784_3-9955184-7.html
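The estimate in the quote is simple multiplication. A quick Python check, using only the figures quoted above (40 servers per rack, 150 racks per data center, 36 data centers); the variable and function names are ours:

```python
# Figures taken from the quoted CNET estimate; they are the writer's
# assumptions, not numbers confirmed by Google.
SERVERS_PER_RACK = 40
RACKS_PER_DATA_CENTER = 150
DATA_CENTERS = 36


def estimate_servers(servers_per_rack, racks_per_data_center, data_centers):
    """Multiply out the per-rack, per-data-center figures."""
    return servers_per_rack * racks_per_data_center * data_centers


total = estimate_servers(SERVERS_PER_RACK, RACKS_PER_DATA_CENTER, DATA_CENTERS)
print(total)  # 216000, i.e. "more than 200,000 servers"
```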

Labels: Google, Webmaster News