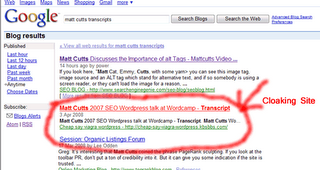

Cloaking still works in Google Blog search – cloaking in Google

I was surprised to see that cloaking still works in Google, or at least in Google Blog Search. Today I was searching for our own blog post, which usually shows up in Google Blog Search as soon as we publish an entry. While doing that, we came across a really weird site. Its snippet in the search results was very tempting to click, but when we clicked through, the site redirected to a via-gra spam site.

This is the spammer’s website. Check the redirected and cloaked URL.

So how will Google stop spam and cloaking in Blog Search?

We’ll have to wait and see.

Search Engine Genie.

Matt Cutts Discusses Snippets – Mattcutts Video Transcript

So just to remind everybody, I am visiting the Kirkland office. They said, you know what, let’s grab a video camera, talk about a few things, have a little bit of fun, and put these videos up on the web.

So one of the things we thought we’d talk about is the snippet. What are the different parts of the snippet? How do we choose what to show and what not to show?

So since we are up in the Pacific Northwest, let’s walk through this snippet for Starbucks, what it looks like, and its different parts.

Alright, the first thing you’ll see is the title, and that’s typically what you set as the title of your web page. So “Starbucks Homepage” is what Starbucks uses for www.starbucks.com. Now, in general, Google reserves the right to change the snippet and make it as useful as possible for users, and we’re always running all kinds of different experiments. Is it more helpful to show two dots or three dots? Do you want to end with dots? Do you want to start with leading spaces? How do you find the most relevant part of the page and say, this is what we should be showing? But the majority of the time, you have a great deal of control over how things get presented.

So in this case Starbucks uses the title “Starbucks Homepage”. Here’s a quick bit of SEO advice for Starbucks: a few people might search for “homepage”, but I might use something like “Starbucks Coffee”, which people are more likely to search for. Okay, enough free advice for Starbucks.

The next thing you see is what we call the snippet: “Starbucks Coffee Company is the leading retailer, roaster and brand of specialty…” and so on.

Now, where does that snippet come from? It can come from many different places. Suppose, for example, that we weren’t able to crawl the URL. Maybe it was down for whatever reason and we couldn’t get a copy of it, so we have nothing from the page, not even the meta description tag. In those cases we sometimes rely on the Open Directory Project. I wouldn’t be surprised if Starbucks is in the Open Directory, so if we couldn’t crawl the page, we might pull the description from there.

Another thing we sometimes do is pull the description from somewhere within the page. Suppose you’ve got a phonebook page, you’re looking for somebody’s name, and the name is way down at the bottom. It’s a lot more helpful to show that name, plus a few words on either side of it, than to show the first fifty words of the page. So we do try to find the most relevant parts of the page. Sometimes it’s a single snippet. Sometimes we take multiple parts of the page and combine them, to give people a little context so they can tell whether this page is really what they’re looking for.

In this case, though, the snippet comes neither from the Open Directory Project nor from the body content of the page itself. I looked into it, did a view source, and it’s the meta description tag.
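To make the phonebook example concrete, here is a minimal sketch that picks a window of words around the first occurrence of a query term instead of showing the first words of the page. This is only an illustration of the idea, not Google’s snippet algorithm. The function name `snippet_around` and the default window size are hypothetical choices.

```python
import re


def snippet_around(text: str, term: str, radius: int = 3) -> str:
    """Return up to `radius` words on either side of the first word matching `term`.

    Falls back to the first 2 * radius + 1 words when the term is absent.
    Hypothetical helper for illustration only; not Google's algorithm.
    """
    words = text.split()
    target = term.lower()
    for i, word in enumerate(words):
        # Compare case-insensitively, ignoring punctuation attached to the word.
        if re.sub(r"\W", "", word).lower() == target:
            start = max(0, i - radius)
            end = min(len(words), i + radius + 1)
            prefix = "... " if start > 0 else ""
            suffix = " ..." if end < len(words) else ""
            return prefix + " ".join(words[start:end]) + suffix
    # Term not found: show the beginning of the page instead.
    return " ".join(words[: 2 * radius + 1])


page = "Adams Alice 555-0101 Baker Bob 555-0102 Young Zoe 555-0199 End of listing"
print(snippet_around(page, "Zoe", radius=2))
# prints "... 555-0102 Young Zoe 555-0199 End ..."
```

A real engine also weighs multiple terms and stitches several fragments together. The single-window version only shows why text near the match beats the top of the page.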

We did a post on the Google Webmaster blog a while ago about how these snippets get picked. It turns out you can use your own meta description tag, and in many cases that is exactly what we will choose as the snippet. But you want to be careful. This is a perfectly fine snippet, but maybe some other snippet would work better, one that makes people say, “Oh, I really want to click through and find out more about that.” You can experiment with different meta descriptions and see which one gets more clicks. We also use several different sources of data when deciding how to pull things together. In this case Starbucks is also a public company, so we show a little plus box. If you want, you can click the plus box to expand it and see a stock chart for Starbucks, to check whether they are doing well in the market.
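Before experimenting with meta description variants, it helps to check which description a page actually exposes. A minimal sketch using Python’s standard `html.parser` module can pull it out. The class and function names are hypothetical. The code only reads the tag and says nothing about whether Google will choose it as the snippet.

```python
from html.parser import HTMLParser
from typing import Optional


class MetaDescriptionParser(HTMLParser):
    """Collect the content of the first <meta name="description"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.description: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        # html.parser already lowercases tag and attribute names.
        if tag != "meta" or self.description is not None:
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "description":
            self.description = attributes.get("content", "").strip()


def meta_description(html: str) -> Optional[str]:
    """Return the page's meta description, or None if it has none."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    parser.close()
    return parser.description
```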

There are a lot of these different options. For example, if you have an address on your page, we’ll often show a plus box that says “View a map of” followed by your address. We are always looking for new ways to surface interesting data. If you go to google.com/experimental, there are views where you can look at search results on a timeline or on a map, and you can even see search results with images and measurements. So if you search for koalas or koala bears, you can say “show me the measurements”. It will then show you things like “koala bears are 20 pounds”, which is really helpful.

In general, whatever interesting information you have on your page that users will care about, we’ll try to surface it, or show relevant information like a stock quote.

Something a little subtle that often goes unnoticed is that we’ve bolded “Starbucks”, because someone queried for Starbucks. Oftentimes, if you do a query and those keywords are on the page, we’ll make them bold so people know that what they typed is actually on that page. We know about morphology and synonyms: if you type in “car”, we can sometimes return results that mention “automobiles”. But that wouldn’t be bolded, or wouldn’t likely be bolded. What’s more likely to be bolded is exactly what you typed in. That gives you a little more information and shows how relevant the page is.
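The bolding behavior described above can be sketched in a few lines. This sketch wraps exact, case-insensitive matches of each query word in `<b>` tags and deliberately leaves synonyms and inflected forms alone. It is an assumption-laden illustration, not Google’s implementation. The helper name is hypothetical, and it assumes the query consists of plain words.

```python
import re


def bold_query_terms(snippet: str, query: str) -> str:
    """Wrap whole-word, case-insensitive matches of each query word in <b> tags.

    Only the literal words typed are bolded: "automobile" is not bolded for
    the query "car", and neither is "cars". Assumes plain-word queries.
    """
    terms = query.split()
    if not terms:
        return snippet
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b",
        re.IGNORECASE,
    )
    # \1 keeps the original capitalization from the snippet.
    return pattern.sub(r"<b>\1</b>", snippet)
```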

Working down a little bit, you can see the URL where you are actually going to end up. 12K stands for 12 kilobytes, which is a relatively small page, so it will load pretty quickly. Then you see the Cached link. Imagine, for example, that the site is down. Maybe you’re the webmaster and you accidentally deleted the page. You could look at the cached page, recover its source, and put it back up again. The cached page has another interesting feature: if you click on it, it shows when we last crawled the page. Say today is October 7th, you look at the cached page, and we last crawled it on October 6th. Then you know how fresh the search results are.

Sometimes, if our copy is very fresh, we can show an indicator right on the snippet (next to the 12k) saying that we crawled the page 17 hours ago, to let you know how fresh it is.

Similar Pages shows you pages related to Starbucks, such as other businesses or other pages you might be interested in. And a lot of the time, if you are logged in to Google, you will see “Note this”.

If you are a student or doing research, it’s really handy. It works with Google Notebook, and all it does is save this result off. As I do my research, I can save a result, come back to it later, and maybe aggregate all of that material into a research chapter.

And then this is really nice: the part that’s a little indented in the snippet. We call these sitelinks. There are a couple of things to know about sitelinks. First off, no money is involved. Somebody always asks, “So did Starbucks pay money to get these?” No, it’s purely algorithmic.

And the second thing is that, because it’s purely algorithmic, it’s not done by hand. We don’t go to Starbucks and say, maybe we’re interested in the store locator, then nutrition, and stuff like that. But there is a lot of sophistication going on here. For example, the title of this page is actually “Starbucks Store Locator”, but you usually don’t need to see all of that, so we can just say “Store Locator”. The title of this other page is something like “Beverage details…”, but the link to that page says “Nutrition”.

So we are selective. We try to pick a short description that gives people enough information to say, “Oh, the store locator is what I want, I’m going to go straight there.” Or, “I want to know how many calories are in a mocha,” and they can go straight to the nutrition page. Again, it’s completely algorithmic and no money is involved. At the bottom, if we have a lot of results from one site, we may show one or two and then say, you know what, maybe you want to see more results from Starbucks. That gives you more diversity: you see one or two results from Starbucks plus other results for that query, and you can still dive deeper if you want to.
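The “show one or two results from a site, then offer more results from it” behavior is a form of host crowding. Here is a minimal sketch under stated assumptions: results arrive as an already-ranked list of URLs, grouping is by exact hostname, and the limit of two per host is a hypothetical value loosely based on Matt’s “one or two”. Google’s actual grouping is certainly more sophisticated.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def crowd_by_host(urls, per_host=2):
    """Keep at most `per_host` results per hostname, preserving rank order.

    Returns (shown, more), where `more` maps each host to how many of its
    results were held back behind a "more results from ..." link.
    """
    shown = []
    counts = defaultdict(int)
    more = defaultdict(int)
    for url in urls:
        host = urlsplit(url).hostname or ""
        if counts[host] < per_host:
            shown.append(url)
            counts[host] += 1
        else:
            more[host] += 1
    return shown, dict(more)
```

Keeping rank order while capping each host leaves room for other sites, which is the diversity trade-off described above.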

So that’s a very quick tour of what a Google snippet is. Hopefully it was helpful.

How to structure a site? – Mattcutts Video Transcript

Okay, as you can see, this is the closest I can get to a world map. Did you know there are 5000 languages spoken across the globe? How many does Google support? Only about 100, so there’s still a long way to go.

Alright, let’s do some more questions. Todd writes in: “Matt, I have a question. One of my clients is about to acquire a domain name that is closely related to their business and has a lot of links pointing to it. He basically wants to 301 redirect it to the final website after the acquisition. Will Google ban or impose a penalty for doing this 301 redirect?” In general, probably not, and you should be okay, because you say it’s closely related. Any time there’s an actual merger of two businesses, and two closely related domains are merged with a 301 redirect, it’s not a problem. However, if you are a music site and you suddenly start getting links from debt consolidation and cheap online yada yada yada, that could be a problem. But what you plan to do is fine, and you should be okay.
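For readers who haven’t set one up, here is a minimal sketch of a permanent (301) redirect from an acquired domain to the main site, written with Python’s standard `http.server`. It keeps the request path so deep links land on the matching page. `NEW_ORIGIN` and `redirect_target` are hypothetical names. In practice most sites configure this in their web server rather than in a Python process.

```python
from http.server import BaseHTTPRequestHandler

# Hypothetical destination: the site the acquired domain is being merged into.
NEW_ORIGIN = "https://www.example.com"


def redirect_target(path: str) -> str:
    """Map a request path on the acquired domain to the same path on the new site."""
    return NEW_ORIGIN + (path if path.startswith("/") else "/" + path)


class PermanentRedirectHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # 301 tells browsers and crawlers that the move is permanent.
        self.send_response(301)
        self.send_header("Location", redirect_target(self.path))
        self.send_header("Content-Length", "0")
        self.end_headers()

    # HEAD requests get the same status and headers (no body is sent either way).
    do_HEAD = do_GET
```

To try it locally, you could serve it with `http.server.HTTPServer(("", 8080), PermanentRedirectHandler).serve_forever()` and request any path on port 8080.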

Barry writes in: “What’s the right way to theme a site using directories? Do you put the main keyword in the directory or on the index page? If you are using directories, do you use a directory for each set of keywords?”

This is a good question, but I think you’re thinking too much about your keywords and not enough about your site. Speaking just for myself, I prefer a tree-like architecture, where everything branches out evenly, like a tree. It’s also good to break things down by topic. If you sell clothes, for example, you might have sweaters in one directory and shoes in another. If you do something like that, your keywords do end up in the directories. As for directories versus the actual HTML file names, it doesn’t really matter to Google. So break your site down by topic and make sure each topic uses the keywords people actually search for. Then if users type those keywords and find your page, you’re in pretty good shape.

Alright, Joe writes in: “If an e-commerce site has too many parameters, say punctuation marks, dots, etc., and it’s unindexable, is it within Google’s guidelines to serve static HTML pages to Googlebot to index instead?” This is something I would be very careful with. If you mess it up, you’ll be doing something called cloaking, which is showing users different content than you show Googlebot. You need to show the exact same content to both users and Googlebot. So my advice is to go back to the earlier question of whether the parameters in your URLs are indexable, and unify things so that users and Googlebot see the same pages. If you do go the static HTML route, that’s going to be much better, with one condition. Whatever HTML page you show to Googlebot, users who go to that page must stay on it and not be redirected or sent somewhere else. They need to see the exact same page Googlebot saw. That’s the main thing you have to be careful about.
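A rough self-check for the “same page for users and Googlebot” rule is to fetch a URL twice, once with a browser User-Agent and once with Googlebot’s, and compare where you end up and what you get. This is a minimal sketch, not a complete cloaking detector. It cannot catch IP-based cloaking, and pages with rotating ads or timestamps will show harmless differences. The helper names are hypothetical. The Googlebot string is its published User-Agent, and the browser string is just an example.

```python
import hashlib
import re
import urllib.request
from typing import Tuple

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"  # example browser string


def fetch_as(url: str, user_agent: str) -> Tuple[str, str]:
    """Fetch `url` with the given User-Agent; return (final URL after redirects, body)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        body = response.read().decode("utf-8", errors="replace")
        return response.url, body


def fingerprint(html: str) -> str:
    """Hash the page after collapsing whitespace, so formatting-only differences are ignored."""
    normalized = re.sub(r"\s+", " ", html).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def looks_cloaked(user_view: Tuple[str, str], bot_view: Tuple[str, str]) -> bool:
    """True if the final URL or the normalized content differs between the two views."""
    user_url, user_html = user_view
    bot_url, bot_html = bot_view
    return user_url != bot_url or fingerprint(user_html) != fingerprint(bot_html)
```

Typical use would be `looks_cloaked(fetch_as(url, BROWSER_UA), fetch_as(url, GOOGLEBOT_UA))` against your own pages. A redirect that only users get, like the spam site earlier on this page, shows up as a different final URL.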

John writes in: “I would like to use A/B split testing on static HTML pages. Will Google understand my PHP redirect for what it is, or will Google penalize my site on the assumption that it’s cloaking? If there is a problem, is there a better way to split test?” That’s a good question. If you can, I would recommend split testing in an area that search engines aren’t going to index. When we go to a page, reload it, and see different content, it does look a bit strange. So if you can, use robots.txt or an .htaccess file so that Googlebot doesn’t index it. That said, I wouldn’t do a PHP redirect; I would configure the server to serve two different pages in parallel. One thing to be careful about, which I touched on in a previous session, is that you should not do anything special for Googlebot. Just treat it like a regular user. That’s the safest approach in terms of not being treated as cloaking.
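Both suggestions above can be sketched with Python’s standard library. The first part checks that a robots.txt rule keeps crawlers out of a test directory. The second part assigns visitors to a variant from a stable hash of a visitor ID and never looks at the User-Agent, so Googlebot is treated like any other visitor. The `/split-test/` path, the example.com URLs, and `choose_variant` are hypothetical. The visitor ID is assumed to come from something like a first-party cookie.

```python
import hashlib
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that keeps crawlers out of the split-test directory
# while leaving the rest of the site crawlable.
ROBOTS_TXT = """\
User-agent: *
Disallow: /split-test/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/products/shoes.html", "/split-test/variant-b.html"]:
    allowed = parser.can_fetch("Googlebot", "http://www.example.com" + path)
    print(path, "crawlable" if allowed else "blocked")


def choose_variant(visitor_id: str) -> str:
    """Split visitors roughly 50/50 by a stable hash of their ID.

    The User-Agent is never consulted, so crawlers get no special treatment.
    """
    digest = hashlib.sha256(visitor_id.encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"
```

Hashing the visitor ID rather than choosing randomly on each request means a returning visitor keeps seeing the same variant.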

And let’s wrap up. Todd asks another question: “Hi Matt, here’s the real question: Ginger or Mary Ann?” I am going to go with Mary Ann.

Why we prepared this Video transcript?

This transcript is copyright – Search Engine Genie.

Feel free to translate it, but please make sure proper credit is given to Search Engine Genie.

Qualities of a good site – Matt Cutts Video Transcript

Hello again, let’s take a few more questions. I hope this works; let’s give it a shot. Raf writes in: “Some comments on Google Sitemaps, please. Do updates in Sitemaps depend on the page views of the site?” I don’t think that’s the case. Page views are not a factor in how things are updated in Sitemaps. There are different pieces of data in Sitemaps; imagine a bunch of different data sets. They can each be updated at different times and at different frequencies. Typically they should be updated within days, or within weeks in the worst case, but as far as I know it doesn’t depend on page views.
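As a related illustration, a site’s own XML Sitemap can tell crawlers when each URL last changed and roughly how often it changes. The sketch below builds a small file in the sitemaps.org format with Python’s `xml.etree.ElementTree`. The URLs, dates, and frequencies are hypothetical. `lastmod` and `changefreq` are hints to crawlers, not commands. None of this relates to page views, which matches Matt’s point.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemaps.org-style XML from (loc, lastmod, changefreq) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, changefreq in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod        # W3C date, e.g. 2008-01-15
        ET.SubElement(url, "changefreq").text = changefreq  # e.g. daily, weekly, monthly
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages that change at different rates.
print(build_sitemap([
    ("http://www.example.com/", "2008-01-15", "daily"),
    ("http://www.example.com/about.html", "2007-06-01", "yearly"),
]))
```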

Let’s do another one: “What are your basic ideas and recommendations for increasing a site’s ranking and visibility in Google?” Okay, this is a meaty topic, definitely a longer one, so let’s dive in. The number one SEO mistake most people make is not making the site crawlable. So I want you to look at your site through a search engine’s eyes, or use a text browser. Go back to 1994 and use Lynx or something like that. If you can get through your site using only a text browser, you are going to be in pretty good shape, because most people don’t even think about crawlability. You also want to look at things like a site map on your site, and you can use our Sitemaps tool as well.

Beyond that, you need content: content that is good, interesting, reasonable and attractive, the kind that makes someone actually link to you. Once you have that content and your site is crawlable, you can go about promoting, marketing and optimizing your website. The main thing I would advise is to think about the people who are relevant to your niche and make sure they know about you. For example, if you run a medical website and you know a doctor, make sure that doctor knows about your site. If he knows about it, it might be appropriate for him to link to you.
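In the spirit of the Lynx test, here is a minimal sketch that lists which links on a page a text-only client could follow. It separates plain `<a href>` links from JavaScript-only or fragment-only navigation. It uses only Python’s standard library. The class and function names are hypothetical, and this is a rough heuristic, not a description of how Googlebot crawls.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkAudit(HTMLParser):
    """Separate plain <a href> links from ones a text-only client can't follow."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.followable = []
        self.unfollowable = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # No href, javascript: URLs, and fragment-only links lead nowhere without JavaScript.
        if not href or href.strip().lower().startswith(("javascript:", "#")):
            self.unfollowable.append(href or "(no href)")
        else:
            self.followable.append(urljoin(self.base_url, href))


def audit_links(html: str, base_url: str) -> LinkAudit:
    audit = LinkAudit(base_url)
    audit.feed(html)
    audit.close()
    return audit
```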

You should also think about a hook, something that holds your visitors: really good content, newsletters, tutorials. When I was setting up all this video stuff and trying to make it look semi-professional, I found a tutorial by a company called Photoflex or something like that. It explains things like key light and throw, and by the way, you need to buy their equipment to do it. That’s really, really smart. In fact, on another photography site I visited, I saw that they had syndicated the other site’s tutorials onto their own website. That can be a great way to get links. You should also think about places like Reddit, Digg, Slashdot, and social networking sites like MySpace. Fundamentally, you need something that sets you apart from the pack. Once you have that, you are going to be in very good shape as far as promoting your site is concerned. But the biggest steps are these. First, make sure your site is crawlable. Then make sure you have good content. Finally, make sure you have a hook that makes people really love your site, return to it, and bookmark it.

Alright, let’s do another one: “what condition asks…”

Alright, this one is a good one. Laura McKenzie asks whether Google favors bold or strong tags. In general we probably favor bold a little bit more, but to be clear, the difference is so slight that I wouldn’t worry about it. Do whatever is best for your users; I don’t think either one is going to give you a boost in Google. Like I said, the effect is relatively small, so do whatever is best for users and for your site, and don’t worry about it much beyond that.

I think that’s it,

Thank you,

Why we prepared this Video transcript?

We know this video is more than a year old, but there are still people who have questions about their sites and want to hear from a search engine expert. There are also millions of non-English speakers who want to know what’s in this video, and a transcript can easily be translated into other languages. We also know that people with hearing disabilities browse our site. This is a friendly version for them, where they can read and understand what’s in the video.

This transcript is copyright – Search Engine Genie.

Feel free to translate it, but please make sure proper credit is given to Search Engine Genie.

Traffic share of the top 500 websites versus other sites on the internet

I see some people discussing the market share of the top 500 websites on the internet versus all the remaining millions of websites. So where do we get credible data to understand this? Alexa has a list of the top 500 websites. But if you look at Alexa’s history, their results are easily skewed, since they come from millions of Alexa toolbar users around the world. How accurate can toolbar data be? Who are these millions of Alexa toolbar users, and do they represent real internet users?

In my opinion, no. Alexa users are mostly site owners, webmasters, techies, etc. It is mostly webmaster-biased traffic and never a reliable way to gauge the real traffic to a website. Even though it is heavily biased, Alexa is still a good place to start, and some of the sites in the Alexa top 500 are the best on the internet. So I wouldn’t complain too much about Alexa.

If you do a Google search for top websites (http://www.google.com/search?hl=en&q=top+internet+websites), you will come across a bunch of good lists of the top sites on the internet. But it’s very difficult to know what kind of traffic a site really gets unless the site shares its log data, which I feel will never happen.

Search Engine Genie

How to stop my site from showing up on non-US Google results

When I was going through the WebmasterWorld Google forum, I saw this weird post:

How to stop my site from showing up on non-US Google results

Wow, for a second it took me by surprise. I seriously wanted to find out why someone would want to block their site from non-US Google results. Here is the post from that person:

“First, I want to make clear, I am not worried about bringing my page rank up at all, I just want to stop showing up on non-US google results. So this isn’t about SEO.

I have a site serving the SE United States. However, 80% of my visitors come from Ireland, Israel, China, Japan, Italy, South America, and of all places, Botswana?

I used some google tool (I can’t remember which) that showed my page rank on non US google indexes varies from 4 to as high as 7. That would explain the off-continent percentage, because I have a white bar / page rank 0 here. So…

1. Is there really a different feed for different countries?
2. Can I keep google indexing for US users but not others?
3. Is it one googlebot gathering data for all places, or are there different ones? (That would explain why there is some google-thing on my msg boards every day. Or there is one google bot and he lives at my place.)
4. To block them or it, do I have to know the name of every bot and spider by IP or nickname or whatever?
5. Is that done by htaccess or robots.txt?”

From reading the post, it looks like the poster really wants to stop traffic from every country other than the US. I don’t think this is possible, since google.com results are served in almost every country, though rankings might vary.

Senior member lammert gave a good and very informative response:

“Geographic targetting boosts ranking in one region compared to others, but it doesn’t remove a site from foreign search results. My experience is that it has no greater power than a country TLD like .de for Germany or .fr for France, or hosting your site on an IP address which is locate in the country to target.

1. Is there really a different feed for different countries?

No, every Google datacenter can produce the results for all countries and languages in the world by just changing a few parameters in the search URL. Google tries to sort the results based on relevancy, matching languages and geographic origin of incoming links to a site, but in principle every URL can appear in every SERP on every visitors location. There is no such thing as totally separate feeds.

2. Can I keep google indexing for US users but not others?

3. Is it one googlebot gathering data for all places, or are there different ones? (That would explain why there is some google-thing on my msg boards every day. Or there is one google bot and he lives at my place.)

There is just one Googlebot crawling for all countries and data centers. If you are on one Google data center, you are practically speaking in all, because they exchange pages on the fly. There is even data sharing behind the scenes between different Google spider technologies. If Googlebot doesn’t visit a specific URL but Mediabot which is used for AdSense ad matching is, the pages fetched by Mediabot may be examined and used by Googlebot.

There is no way you can block your site from showing up in Google results for one country and not for others, unless your site is China related and happens to trigger a filter in Google’s China firewall.

The only way to tackle this reliably is to block the foreign visitors at your door, i.e. use some form of geo targeting where you map the IP address of the visitor to a geographical location and allow or deny access based on that. But geo targeting is not 100% reliable, especially with some larger ISPs like AOL which use a handful of proxies for all their customers and you may end up with some foreign visitors slipping through, and worse, a number of legitimate visitors who can’t connect anymore.

My advice is not to fight the battle against foreign visitors, but to monetize the traffic. Many people are fighting for traffic and you–wanting to kill 80% of your traffic because it doesn’t match the current content of the site–are really an exception. Why not monetize this traffic in some way instead of blocking people? This is free traffic which is in principal targeted audience, based on that they found you through Google search, and not some form of shady traffic generation scheme.”
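To show what lammert’s “block the foreign visitors at your door” approach involves, and why it is fragile, here is a minimal sketch of an IP-to-country allow check using Python’s `ipaddress` module. The lookup table is entirely hypothetical: it uses reserved documentation ranges from RFC 5737 as stand-ins, whereas a real site would rely on a maintained GeoIP database. Unknown addresses, such as shared ISP proxies, are let through by default, because misclassifying them is exactly the risk lammert describes. This illustrates the technique and is not a recommendation; the advice above is to monetize the traffic instead.

```python
import ipaddress
from typing import Optional

# Hypothetical lookup table. These are reserved documentation ranges (RFC 5737)
# standing in for real allocations; a real site would use a GeoIP database.
GEO_TABLE = {
    ipaddress.ip_network("192.0.2.0/24"): "US",
    ipaddress.ip_network("198.51.100.0/24"): "IE",
    ipaddress.ip_network("203.0.113.0/24"): "BW",
}


def country_for(ip: str) -> Optional[str]:
    """Map an IP address to a country code, or None if it is not in the table."""
    address = ipaddress.ip_address(ip)
    for network, country in GEO_TABLE.items():
        if address in network:
            return country
    return None  # unknown, e.g. a shared proxy the table doesn't cover


def allow_visitor(ip: str, allowed=frozenset({"US"}), allow_unknown=True) -> bool:
    """Decide access by mapped country; unmapped addresses follow `allow_unknown`."""
    country = country_for(ip)
    if country is None:
        return allow_unknown
    return country in allowed
```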

In my view there is no point blocking users from other countries; they can be a valuable resource. Our site www.searchenginegenie.com gets about 50% of its traffic from outside the US, and we enjoy that traffic as much as our US traffic. Traffic from France, Germany and Spain is especially useful, since people there love search engines and search engine optimization. Some French forums send traffic to our tools and blog posts. That traffic is sometimes 10 times more than what active forums like Search Engine Watch and Digital Point forums send us. This shows their passion for online business, search engine optimization, and the art of making money online.

Pagerank update – Is there an update going on in Google

A WebmasterWorld member has reported a PageRank change for his site. It seems his site has lost some PageRank and has been downgraded from PR5 to PR3. This looks like Google’s new “Toolbar PageRank Reduction” or TPR policy. Google introduced TPR around December 2007 to go after text link buyers and sellers. Text link advertising that passes PageRank is against Google’s policy, and Google introduced TPR to tackle it.

Lots of sites depend on Google toolbar PageRank to judge the value of a link, along with other things like the number of backlinks in Yahoo and the Alexa ranking. Google toolbar PageRank is very important when it comes to buying or selling links these days. A PR9 link goes for around $800 a month, which is a huge amount for a link. I am sure this industry is no longer flourishing since TPR was introduced. I would say TPR cut text link advertising by at least 40 to 60%. It gave link publishers butterflies in their stomachs. Many advertisers who were selling links on their sites or buying links to their sites panicked and removed all of them, to make sure they didn’t lose any further trust in Google.

Being part of an SEO company, I know that TPR affected a lot of sites that didn’t buy links. But inside, I appreciate Google for taking strong steps to protect its algorithm from being manipulated through text link advertising. I have always said that text link advertising is for the rich and famous on competitive keywords. It should stop; otherwise real quality sites that don’t buy anchor text links will not get the exposure in Google that they deserve.

Forum discussion on WebmasterWorld.

Matt Cutts Discusses the Importance of alt Tags – Mattcutts Video Transcript

But the general problem of detecting what an image is and being able to describe it is really, really hard, so you shouldn’t count on a computer being able to do that. Instead, you can help Google. Let’s see what this image might look like. If you look on the right, this might be a typical image source: img src="DSC00042.JPG".

You’ve got your image tag, and you describe what the source is. Here it’s DSC because it came from a digital camera, blah blah blah, 42.JPG. That doesn’t give us a lot of information, right? You want to be able to say this is a cat with a ball of yarn, and instead here’s a number that gives virtually zero information. If you go down a little bit, here is the sort of information we want to show up. You want to be able to say this is Matt’s cat, Emmy Cutts, with some yarn. That’s not a lot of words, but it adequately describes the scene and gives a very clear picture of what’s going on. It includes words like yarn and Emmy Cutts, which are all completely relevant to that image. It isn’t stuffed with tons of words like cat, cat, cat, feline, lots of cats, cat breeding, cat fur, and all sorts of stuff. So you want a very simple description included with that image.

How do you do that? If you look here, alt="Matt’s cat, Emmy Cutts, with some yarn", you can see the image tag, the image source, and an ALT attribute, which stands for alternative text. If somebody is using a screen reader, or the image can’t load for some reason, the browser can show this alternative text instead, and it’s very helpful for Google. Now Google can tell what’s going on, and people who care about accessibility also get a good description of the image. You are not spamming: this is a total of 7 words. You don’t need 200 words in your ALT text, or anything like a ton of words, because 7 is enough to describe a scene pretty well. If you get to 20 or 25, that’s getting a little bit out there. 7 is perfectly fine, since you’re describing what’s going on within the picture itself.

You can also look at other attributes like title and things like that, but this is enough to help Google know what’s going on in the image. You could go further and name your image something like ‘Cat and Yarn.JPG’, but we’re looking for something lightweight and easy to do. Adding an ALT attribute is very easy, and you should pretty much do it for all of your images. It helps your accessibility, and it can help us (Google) understand what’s going on in your image.
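Checking “pretty much all of your images” by hand gets tedious on a large site. Here is a minimal audit sketch using Python’s `html.parser` that flags `<img>` tags whose alt text is missing, empty, or suspiciously long. The 25-word threshold is a hypothetical cutoff taken from the “20 or 25 is getting out there” remark. An empty alt is legitimate for purely decorative images, so that case is flagged for review rather than treated as an error.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Flag <img> tags whose alt text is missing, empty, or suspiciously long."""

    def __init__(self, max_words: int = 25) -> None:  # hypothetical threshold
        super().__init__()
        self.max_words = max_words
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or "(no src)"
        alt = attributes.get("alt")
        if alt is None:
            self.problems.append((src, "missing alt"))
        elif not alt.strip():
            self.problems.append((src, "empty alt (fine only for decorative images)"))
        elif len(alt.split()) > self.max_words:
            self.problems.append((src, f"{len(alt.split())} words in alt"))
```

For example, feeding it `<img src="DSC00042.JPG">` reports a missing alt, while the 7-word description of Emmy passes.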

Some SEO Myths – Mattcutts Video Transcript

Alright, I am trying to upload the last clip to Google Video, so we’ll see how it looks. While I’m waiting, I can do a few more questions and see if we can knock a few out. I’m realizing that with this video camera I can record about 8 minutes of video before I hit the 100 megabyte limit. Past that I’d have to use the client uploader, so I’ll probably split these into chunks of 5 to 8 minutes each.

So Ryan writes in: “Can you put to rest some myths about having too many sites on the same server, having sites on IPs that look similar to each other, or having them include the same JavaScript from a different site?” In general, if you are an average webmaster, this is something you shouldn’t have to worry about. Now I have to tell a story about Tim Mayer and me on a penalty panel. Someone said, “Hey, you took all my sites out.” He said both Google and Yahoo did, and that he didn’t really have that many. So Tim looked at the guy and asked, “So how many sites did you have?”

The guy looked a little sheepish for a minute, and then he said, “Well, I had about 2000 sites.” So there is a range, right? If you have 4 or 5 sites with different themes or different content, you don’t really need to worry. But if you have 2000 sites, ask yourself whether you have enough value-added content to support 2000 sites. The answer is probably not. If you are an average webmaster, I wouldn’t worry about being on the same IP address, and I definitely wouldn’t worry about being on the same server; everyone does that. The last thing Ryan asked about was JavaScript. A lot of sites include shared JavaScript; Google AdSense, for example, is included JavaScript. It’s common on the web, and you don’t have to worry about it at all. But again, if you have 5000 sites and you’re including JavaScript that does some sneaky redirect, then you need to worry. If you use JavaScript on a few sites for entirely logical reasons, I wouldn’t worry at all.

Alright, Aaron writes in with a kind of interesting question: “I am having a hard time understanding the problems that we face when we launch a new country. Typically we launch a new country with millions of new pages at the same time. Additionally, due to our enthusiastic PR team, we get tons of backlinks as well as press coverage during every launch.” He says the last time they did this, they launched a site for Australia and it didn’t do very well at all.

Aaron, this is a good question, primarily because the answer has changed somewhat since the last time we talked about it. Someone asked this question when we were at a conference in New York, and I said just go ahead and launch it, you don’t have to worry about it; it may look a bit weird, but it will be just fine. But if you are launching your site with millions of web pages, you’ve got to be a little more cautious if you can. In general, if you are launching with that many pages, it’s probably better to launch a little more softly: start with a few thousand pages, then add a few thousand more, and so on. Millions of pages is a lot of pages; Wikipedia has, what, 5 or 10 million pages? So if you are launching that many pages, find ways to scrutinize them and make sure they are all good pages, or you may find yourself not doing as well as you hoped.

Alright, quick question: classic nation writes in and asks, “What’s the status on Google Images, and will we be able to hear about the indexing technology of the future?”

Actually, there was a thread about this on WebmasterWorld. We just did an index update for Google Images, I think last weekend. I was talking to someone on the Google Images team, and they are always working hard. There may be new updates in the future where we bring in new images that the main index has, and stuff like that, but they are always working on making the Google Images index better.

Static vs. Dynamic URLs – Matt Cutts Video Transcripts

Hi everyone, here we go again. I am learning something every time I do one of these; for example, it’s probably smart to mention that today is Sunday, July 30th, 2006.

Alright, Gerby writes in: “Does Googlebot treat dynamic pages differently than static pages? My company writes Perl, and there are query strings in our URLs, and so on.”

That’s a good question. My first answer is that we do treat static and dynamic pages equally, so let me explain that in a little more detail. PageRank flows to dynamic URLs the same way it flows to static URLs, so if you’ve got the New York Times linking to a dynamic URL, you will get the PageRank benefit, and that URL will still pass PageRank on. Some other search engines in the past said, okay, we go one level deep from static URLs: we will crawl a dynamic URL, but we will only go one level into dynamic URLs. So the short answer is that PageRank flows the same way between static and dynamic URLs. Now let’s go into a more detailed answer. The example you gave has about 5 parameters, and one of them is a product ID, 2725. You definitely shouldn’t use too many parameters; I would recommend 2 or 3 at most if you opt to use them, and avoid very long numbers, because we might confuse them with session IDs. Getting rid of any extra parameters you can is always a good idea. And remember, Google is not the only search engine out there, so if you have the ability to do a little mod_rewrite and make the URL look like a static URL, that is a very good way to tackle the problem. PageRank still flows either way, but experiment: if you see URLs with the same structure and the same number of parameters as the ones you are thinking of using, it’s probably better to cut down the number of parameters or shorten them, or to use mod_rewrite.

Alright, Mark writes in with an interesting question. He has a friend whose site was hacked, and the friend didn’t know about it for a couple of months because they had taken it out or something like that. So he asks: can Google notify the webmaster, basically through Sitemaps, when a site is hacked, and maybe tell them that inappropriate pages were crawled?
That’s a great question. My guess is that we don’t have the resources for something like that right now. In general, if somebody is hacked and they have a small number of sites to monitor, they will find out about it really quickly, or the web host will alert them. The webmaster console team is always working on new things, but my guess is that this is not on the priority list right now.
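The static-versus-dynamic URL advice given to Gerby above can be illustrated with a small Python sketch. This is not anything Google or Apache provides: the `/products/<id>` path, the `/cgi-bin/product.pl` script, the parameter names, and the helper functions are all hypothetical. `rewrite` plays the role of a mod_rewrite rule (a clean, static-looking public path mapped to the internal dynamic URL), while `count_params` and `trim_params` show checking and cutting down query-string parameters such as session IDs.

```python
import re
from typing import AbstractSet
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical rewrite rule in the spirit of an Apache mod_rewrite rule:
# the public path /products/2725 maps to the internal dynamic URL
# /cgi-bin/product.pl?id=2725. Paths and names are illustrative only.
REWRITE_RULES = [
    (re.compile(r"^/products/(\d+)$"), "/cgi-bin/product.pl?id={0}"),
]


def rewrite(path: str) -> str:
    """Return the internal dynamic URL for a clean public path, or the path unchanged."""
    for pattern, target in REWRITE_RULES:
        match = pattern.match(path)
        if match:
            return target.format(*match.groups())
    return path


def count_params(url: str) -> int:
    """Count the query-string parameters in a URL."""
    return len(parse_qsl(urlsplit(url).query, keep_blank_values=True))


def trim_params(url: str, keep: AbstractSet[str]) -> str:
    """Drop every query parameter whose name is not in `keep` (e.g. session or tracking IDs)."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k in keep]
    return parts._replace(query=urlencode(kept)).geturl()
```

For example, `rewrite("/products/2725")` gives `/cgi-bin/product.pl?id=2725`, and trimming a five-parameter URL down to `{"id", "cat"}` leaves only the two parameters that actually identify the page, which is in line with the "2 or 3 at most" suggestion.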

Ok, James says: “Hey Matt, in the fullness of time I am going to use geo-targeting software that will show different messages to different people in different parts of the world, for example a different pricing structure. Are we safe using this type of geo-targeting software? Clearly we want to avoid any suspicion of cloaking.” That’s a very interesting question, so let’s talk about it a little. Google’s webmaster guidelines very clearly say not to show users different content than what you show to search engines. Geo-targeting by itself is not cloaking under Google’s guidelines, because what you are doing is taking an IP address and saying, hey, you are from Canada, we will show you this particular page; or taking the IP address and saying, hey, you are from Germany, we will show you this particular page. The thing that will get you in trouble is treating Googlebot as a special guest and doing something special for it. So geo-targeting Googlebot as if it came from its own country, “Googlebotistan,” is bad. Instead, just treat Googlebot as a regular user. If you are targeting by country and Googlebot is coming from the United States, just show it what people in the United States would see. Google itself does geo-targeting, for example, and we don’t think that’s cloaking; it’s all about playing your cards well. So again, cloaking is showing one set of content to users and different content to search engines. In this case, just treat Googlebot like any other visitor with that particular IP address, and you should be totally fine.
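James’s question comes down to one rule: the page you serve may depend on where the visitor is, but not on whether the visitor is Googlebot. Below is a minimal Python sketch of that rule, under stated assumptions: the IP table is a hypothetical stand-in for a real GeoIP lookup (it uses the reserved RFC 5737 documentation ranges, assigned to countries purely for illustration), and the page paths and function names are invented for the example.

```python
import ipaddress
from typing import Optional

# Hypothetical stand-in for a GeoIP database: reserved documentation
# ranges (RFC 5737) mapped to countries purely for illustration.
IP_RANGES = [
    (ipaddress.ip_network("192.0.2.0/24"), "US"),
    (ipaddress.ip_network("198.51.100.0/24"), "CA"),
    (ipaddress.ip_network("203.0.113.0/24"), "DE"),
]

# Hypothetical per-country pricing pages.
PAGES_BY_COUNTRY = {
    "US": "/us/pricing",
    "CA": "/ca/pricing",
    "DE": "/de/pricing",
}
DEFAULT_PAGE = "/intl/pricing"


def country_for_ip(ip: str) -> Optional[str]:
    """Return the country code for an IP address, or None if it is unknown."""
    addr = ipaddress.ip_address(ip)
    for network, country in IP_RANGES:
        if addr in network:
            return country
    return None


def choose_page(ip: str, user_agent: str) -> str:
    """Pick the page to serve from the visitor's IP-derived country only.

    `user_agent` is accepted but deliberately never consulted, so a crawler
    such as Googlebot gets exactly the page a human visitor from the same
    IP address would get. Adding a branch like `if "Googlebot" in user_agent:`
    that serves something else is what would turn this into cloaking.
    """
    country = country_for_ip(ip)
    if country is None:
        return DEFAULT_PAGE
    return PAGES_BY_COUNTRY.get(country, DEFAULT_PAGE)
```

Because `choose_page` never branches on the user agent, a Googlebot request from a US address lands on the same `/us/pricing` page as a US browser, which is the “treat Googlebot as a regular user” behavior described above.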

Alright, it’s time for another break.

Why did we prepare this video transcript?

We know this video is more than a year old, but there are still people who have questions about their sites and want to hear from a search engine expert. There are also millions of non-English speakers who want to know what’s in this video, and a transcript can easily be translated into other languages. We also know that people with hearing disabilities browse our site; this is a friendly version for them, where they can read and understand what’s in this video.

This transcript is copyright – Search Engine Genie.

Feel free to translate it, but make sure proper credit is given to Search Engine Genie.
